Verification and Validation

Steven Zeil

Abstract

Verification & Validation: any activities that seek to assure that a software system meets the users’ needs.

The principal objectives are

- the discovery of defects in a system, and

- the assessment of whether or not the system is usable in an operational situation.

The most familiar form of V&V is testing.

Through the rest of this module, we will indeed be taking a close look at testing, unit testing in particular. Before doing that, however, it’s worth noting that testing is not the only way to do V&V.

We will look at various forms of

- code review and inspection,

- proof, and

- static analysis

as alternatives or, more often, supplements to testing.

1 The Process

Verification & Validation

- Verification:

  - “Are we building the product right?”

  - The software should conform to its (most recent) specification.

- Validation:

  - “Are we building the right product?”

  - The software should do what the user really requires.

Testing

- Testing is the act of executing a program with selected data to uncover bugs.

  - As opposed to debugging, which is the process of finding the faulty code responsible for failed tests.

- Testing is the most common, but not the only, form of V&V.

What Can We Find?

- Fault: A defect in the source code.

- Failure: An incorrect behavior or result.

- Error: A mistake by the programmer, designer, etc., that led to the fault.
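The three terms can be told apart in a small example. The sketch below is hypothetical (the function `largest` is not from these notes). The error is the programmer's unstated assumption that all values are non-negative; the fault is the initialization `best = 0`; a failure occurs only when the function is run on all-negative data. With `{3, 7, 1}` the result is correct even though the fault is present, while with `{-5, -2}` the function returns 0 instead of -2.

```cpp
#include <cstddef>
#include <vector>

// Intended behavior: return the largest element of a non-empty vector.
// Hypothetical function, written only to illustrate the terminology.
int largest(const std::vector<int>& values) {
    int best = 0;  // FAULT: should start from values[0], not from 0
    for (std::size_t i = 0; i < values.size(); ++i) {
        if (values[i] > best) {
            best = values[i];
        }
    }
    return best;
}
```

A test suite that happens to use only positive data would never expose this fault, which is one reason the choice of test data matters.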

Industry figures of 1-3 faults per 100 statements are quite common.

2 Non-Testing V&V

Static Verification

Verifying the conformance of a software system to its specification without executing the code

- Involves analyses of source text by humans or software

- Can be carried out on ANY documents produced as part of the software process

- Discovers errors early in the software process

- Usually more cost-effective than testing for defect detection at the unit and module level

- Allows defect detection to be combined with other quality checks

Static verification effectiveness

It has been claimed that

- More than 60% of program errors can be detected by informal program inspections

- More than 90% of program errors may be detectable using more rigorous mathematical program verification

- The error detection process is not confused by the existence of previous errors (in testing, by contrast, one error can mask another)

2.1 Code Review

Inspecting the code in an effort to detect errors

- Desk Checking

- Inspection

2.1.1 Desk Checking

- An exercise conducted by the individual programmer.

- “Playing computer” with the aid of a listing.

  - Values of variables are tracked using pencil and paper as the programmer moves step-by-step through the code.

- Can be done with pseudocode, diagrams, etc., even before code has been written.

- A good way to find fundamental flaws in algorithms, especially before actually writing the code.

- Useful in checking the results of an intended change during debugging.
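As an illustration of "playing computer", here is a short function followed by the kind of table a programmer might fill in by hand. The function and the chosen inputs are ours, not taken from the notes; the trace is written as a comment so that it stays next to the code it describes.

```cpp
// Euclid's algorithm for non-negative integers (hypothetical example).
int greatestCommonDivisor(int a, int b) {
    while (b != 0) {
        int r = a % b;  // remainder of the current pair
        a = b;
        b = r;
    }
    return a;
}

// Desk check for greatestCommonDivisor(48, 18):
//
//   pass  |  r  |  a  |  b
//   ------+-----+-----+-----
//   start |  -  | 48  | 18
//     1   | 12  | 18  | 12
//     2   |  6  | 12  |  6
//     3   |  0  |  6  |  0
//
//   b is now 0, so the loop ends and the function returns a = 6.
```

Each row records the values after one pass through the loop body, which is exactly what the pencil-and-paper exercise tracks.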

2.1.2 Inspection

- Formalized approach to document reviews

- Intended explicitly for defect detection (not correction)

- Defects may be logical errors, anomalies in the code that might indicate an erroneous condition (e.g., an uninitialized variable), or non-compliance with standards

Inspection pre-conditions

- A precise specification must be available.

- Team members must be familiar with the organization standards.

- Syntactically correct code must be available.

- An error checklist should be prepared.

- Management must accept that inspection will increase costs early in the software process.

- Management must not use inspections for staff appraisal.

Inspection procedure

- System overview presented to inspection team

- Code and associated documents are distributed to inspection team in advance

- Inspection takes place and discovered errors are noted

- After inspection meeting,

  - Modifications are made to repair discovered errors

  - Re-inspection may or may not be required

Inspection teams

- Made up of at least 4 members:

  - Author of the code being inspected

  - Reader who reads the code to the team

  - Moderator who chairs the meeting and notes discovered errors

    - Sometimes a separate “secretary” / “scribe” is used

  - Inspector(s) who find errors, omissions and inconsistencies

Inspection rate

- 500 statements/hour during overview

- 125 source statements/hour during individual preparation

- 90-125 statements/hour can be inspected in the meeting itself

- Inspection is therefore an expensive process

  - Inspecting 500 lines costs about 40 person-hours of effort (\( \geq \$8 \) per line)
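One way to arrive at a figure of about 40 hours is sketched below. It assumes a team of four (the minimum size given above) in which every member takes part in all three phases; the notes do not state this, nor do they give an hourly cost, so the rate \( c \) is a placeholder.

```latex
\[ t_{\text{overview}} = \frac{500}{500} = 1 \text{ h}, \qquad
   t_{\text{preparation}} = \frac{500}{125} = 4 \text{ h}, \qquad
   t_{\text{meeting}} = \frac{500}{125} \text{ to } \frac{500}{90} \approx 4 \text{ to } 5.6 \text{ h} \]

\[ \text{effort} = 4 \text{ people} \times (1 + 4 + t_{\text{meeting}})
   \approx 36 \text{ to } 42 \text{ person-hours} \approx 40 \text{ person-hours} \]

\[ \text{cost per line} = \frac{40\,c}{500} \geq \$8
   \quad\Longleftrightarrow\quad c \geq \$100 \text{ per person-hour} \]
```

Under these assumptions, the \( \geq \$8 \) per line figure corresponds to a loaded labor cost of at least $100 per person-hour.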

Inspection checklists

- Checklist of common errors should be used to drive the inspection

- Error checklist is programming language dependent

- The “weaker” the type checking, the larger the checklist

- Examples: initialization, constant naming, loop termination, array bounds, etc.

Inspection checks

What kinds of faults would appear in a checklist?

- Data Faults

  - Are all variables initialized before use?

  - Have all constants been named?

  - Should array lower bounds be 0, 1, or something else?

  - Should array upper bounds be the size of the array or size \( - 1 \)?

  - If character strings are used, is a delimiter explicitly assigned?

  - Are all data members initialized in every constructor?

  - Is C++’s “Rule of the Big 3” satisfied?

- Control Faults

  - For each conditional statement, is the condition correct?

  - Is each loop certain to terminate?

  - Are compound statements correctly bracketed?

  - In case statements, are all possible cases accounted for?

- I/O Faults

  - Are all input variables used?

  - Are all output variables assigned before being output?

- Interface Faults

  - Do all function/procedure calls have the correct number of parameters?

  - Do the formal and actual parameter types match?

  - Are the parameters in the right order?

  - If components access shared memory, do they have the same model of the shared memory structure?

- Storage Mgmt Faults

  - If a linked structure is modified, have all links been correctly assigned?

  - If dynamic storage is used, has space been allocated correctly?

  - Is space explicitly deallocated after it is no longer required?

  - Are all pointer data members deallocated in the destructor?

- Exception Mgmt Faults

  - Have all possible error conditions been taken into account?

- Stylistic/Standards Faults

  - Are names understandable?

  - Does code conform to standards for commenting?

  - Does code provide capturable outputs for testing?

  - Does code take advantage of possible re-use?
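Several of the C++-specific questions above (data members initialized in every constructor, the Rule of the Big 3, pointer members deallocated in the destructor) can be seen together in one small class. The class `IntBuffer` below is a hypothetical sketch of code that would pass those checks; it is not taken from the course materials.

```cpp
#include <algorithm>
#include <cstddef>
#include <utility>

// Hypothetical class that owns a dynamically allocated array.
class IntBuffer {
public:
    // Every data member is initialized; the array is zero-filled.
    explicit IntBuffer(std::size_t n)
        : size_(n), data_(new int[n]()) {}

    // Big 3, part 1: the copy constructor makes a deep copy.
    IntBuffer(const IntBuffer& other)
        : size_(other.size_), data_(new int[other.size_]) {
        std::copy(other.data_, other.data_ + other.size_, data_);
    }

    // Big 3, part 2: assignment, written here with copy-and-swap.
    IntBuffer& operator=(IntBuffer other) {
        std::swap(size_, other.size_);
        std::swap(data_, other.data_);
        return *this;
    }

    // Big 3, part 3: the destructor releases the pointer data member.
    ~IntBuffer() { delete[] data_; }

    std::size_t size() const { return size_; }
    int& at(std::size_t i) { return data_[i]; }
    const int& at(std::size_t i) const { return data_[i]; }

private:
    std::size_t size_;
    int* data_;
};
```

If the copy constructor and assignment operator were omitted, the compiler-generated versions would copy only the pointer, so two objects would share one array and both destructors would try to delete it. That is the kind of defect an inspector working from the checklist is looking for.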

2.2 Mathematically-based verification

- Verification is based on mathematical arguments which demonstrate that a program is consistent with its specification

- Programming language semantics must be formally defined

- The program must be formally specified

Program proving

- Rigorous mathematical proofs that a program meets its specification are long and difficult to produce

- Some programs cannot be proved because they use constructs such as interrupts.

  - These may be necessary for real-time performance

- The cost of developing a program proof is so high that it is not practical to use this technique in the vast majority of software projects

Program verification arguments

- Less formal, mathematical arguments can increase confidence in a program’s conformance to its specification

- Must demonstrate that a program conforms to its specification

- Must demonstrate that a program will terminate
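A verification argument of this kind usually rests on a loop invariant (for conformance) and a decreasing quantity (for termination). The sketch below shows both for integer division by repeated subtraction. The specification, the struct `QuotRem`, and the function name are assumptions made for the example; the `assert` calls only spot-check the argument at run time and are not a substitute for it.

```cpp
#include <cassert>

// Assumed specification for this sketch:
//   precondition:  n >= 0 and d > 0
//   postcondition: n == q * d + r  and  0 <= r < d
struct QuotRem {
    int q;
    int r;
};

QuotRem divideBySubtraction(int n, int d) {
    assert(n >= 0 && d > 0);  // precondition
    int q = 0;
    int r = n;
    // Invariant: n == q * d + r and r >= 0.
    // It holds on entry because n == 0 * d + n and n >= 0.
    while (r >= d) {
        // Termination: r is a non-negative integer that shrinks by d > 0
        // on every pass, so the loop cannot run forever.
        r -= d;
        q += 1;
        assert(n == q * d + r && r >= 0);  // invariant is preserved
    }
    // On exit r < d; together with the invariant this is the postcondition.
    return QuotRem{q, r};
}
```

The comments carry the actual argument: the invariant holds initially, each pass preserves it, and the exit condition plus the invariant imply the postcondition.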

Model Checking

- Simplified models on which properties can be proved

  - Finite-state automata (FSA)

  - Markov chains

- Focus on properties short of correctness

  - e.g., avoiding race conditions

- Machine-assisted
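To make the idea concrete, the sketch below exhaustively explores a tiny finite-state model of two processes competing for a lock and checks one property: the two are never in the critical section at the same time. The model, the state encoding, and all names are invented for this example; real model checkers use their own modeling languages and far more efficient search.

```cpp
#include <array>
#include <queue>
#include <set>
#include <vector>

// Model state: a program counter per process plus the lock bit.
// pc values: 0 = idle, 1 = has seen the lock free (non-atomic model only),
//            2 = in the critical section.
struct State {
    std::array<int, 2> pc;
    bool locked;
    bool operator<(const State& o) const {
        if (pc != o.pc) return pc < o.pc;
        return locked < o.locked;
    }
};

// All states reachable from s by one step of one process.
std::vector<State> successors(const State& s, bool atomicAcquire) {
    std::vector<State> next;
    for (int p = 0; p < 2; ++p) {
        State t = s;
        if (s.pc[p] == 0 && !s.locked) {
            if (atomicAcquire) {
                t.pc[p] = 2;        // test and set in a single step
                t.locked = true;
            } else {
                t.pc[p] = 1;        // test only; the set happens later
            }
            next.push_back(t);
        } else if (s.pc[p] == 1) {
            t.pc[p] = 2;            // set the lock after a possibly stale test
            t.locked = true;
            next.push_back(t);
        } else if (s.pc[p] == 2) {
            t.pc[p] = 0;            // leave the critical section, release
            t.locked = false;
            next.push_back(t);
        }
    }
    return next;
}

// Breadth-first search over every reachable state. Returns true if no
// reachable state has both processes in the critical section.
bool mutualExclusionHolds(bool atomicAcquire) {
    State start{};                  // both processes idle, lock free
    std::set<State> seen;
    std::queue<State> frontier;
    seen.insert(start);
    frontier.push(start);
    while (!frontier.empty()) {
        State s = frontier.front();
        frontier.pop();
        if (s.pc[0] == 2 && s.pc[1] == 2) return false;  // property violated
        for (const State& t : successors(s, atomicAcquire)) {
            if (seen.insert(t).second) frontier.push(t);
        }
    }
    return true;
}
```

In this model `mutualExclusionHolds(true)` reports that the property holds, while `mutualExclusionHolds(false)` finds the interleaving in which both processes test the lock before either sets it. The property checked is much weaker than full correctness, which is what makes an exhaustive search feasible.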

2.3 Static analysis tools

- Software tools for source text processing

- Try to discover potentially erroneous conditions in a program and bring these to the attention of the V&V team

- Very effective as an aid to inspections: a supplement to, but not a replacement for, inspections

Static analysis checks

What kinds of faults can be detected by static analysis?

- Data faults

  - Variables used before initialization

  - Variables declared but never used

  - Variables assigned twice but never used between assignments

  - Possible array bounds violations

  - Undeclared variables

- Control faults

  - Unreachable code

  - Unconditional branches into loops

- Interface faults

  - Parameter type mismatches

  - Parameter number mismatches

  - Function return values unused

  - Uncalled functions and procedures

- Storage mgmt faults

  - Unassigned pointers

  - Pointer arithmetic
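The hypothetical fragment below compiles and runs, yet contains three of the anomalies listed above, each marked with a comment. Whether a particular tool reports a given anomaly depends on the tool and on how it is configured, so treat the comments as examples of what such tools look for rather than a promise about any specific analyzer.

```cpp
#include <vector>

// Hypothetical helper: shifts all values so the first becomes 0.
// Returns false when there is nothing to do.
bool normalize(std::vector<int>& v) {
    if (v.empty()) return false;
    int first = v[0];
    for (int& x : v) x -= first;
    return true;
}

int lastAfterNormalize(std::vector<int> v) {
    int result = 0;
    result = -1;        // data fault: assigned twice, never used in between
    normalize(v);       // interface fault: function return value unused
    if (v.empty()) {
        return result;
        result = 0;     // control fault: unreachable code
    }
    return v.back();
}
```

None of these anomalies is necessarily a bug, but each suggests the programmer may have intended something different, which is why they are worth bringing to a reviewer's attention.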

Stages of static analysis

- Control flow analysis.

  - Checks for loops with multiple exit or entry points, finds unreachable code, etc.

- Data use analysis.

  - Detects uninitialized variables, variables written twice without an intervening use, variables which are declared but never used, etc.

- Interface analysis.

  - Checks the consistency of routine and procedure declarations and their use

- Information flow analysis.

  - Identifies the dependencies of output variables. Does not detect anomalies itself but highlights information for code inspection or review

- Path analysis.

  - Identifies paths through the program and sets out the statements executed in that path. Again, potentially useful in the review process

- These stages generate vast amounts of information.

  - Must be used with care.
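The data use stage can be sketched in a few lines if the program is reduced to a straight-line list of reads and writes. That representation is an assumption made to keep the example short (real tools work over the control-flow graph), and the names `Event` and `dataUseAnomalies` are invented here.

```cpp
#include <map>
#include <string>
#include <vector>

// One step of a straight-line program: a variable is written or read.
enum class Access { Write, Read };

struct Event {
    Access kind;
    std::string var;
};

// What is known about a variable that has been written at least once.
enum class Status { WrittenUnread, WrittenRead };

// Scans the events in order and reports three kinds of anomaly.
std::vector<std::string> dataUseAnomalies(const std::vector<Event>& program) {
    std::map<std::string, Status> status;
    std::vector<std::string> report;
    for (const Event& e : program) {
        auto it = status.find(e.var);
        if (e.kind == Access::Read) {
            if (it == status.end()) {
                report.push_back("read before write: " + e.var);
            } else {
                it->second = Status::WrittenRead;
            }
        } else {
            if (it != status.end() && it->second == Status::WrittenUnread) {
                report.push_back("written twice without use: " + e.var);
            }
            status[e.var] = Status::WrittenUnread;
        }
    }
    // Anything still unread at the end was written for nothing.
    for (const auto& entry : status) {
        if (entry.second == Status::WrittenUnread) {
            report.push_back("written but never used: " + entry.first);
        }
    }
    return report;
}
```

For the sequence write x, write x, read x, read y, write z, this sketch reports one anomaly of each kind. Even on a toy input the report has three entries, which hints at why the output of a full analysis has to be filtered with care.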

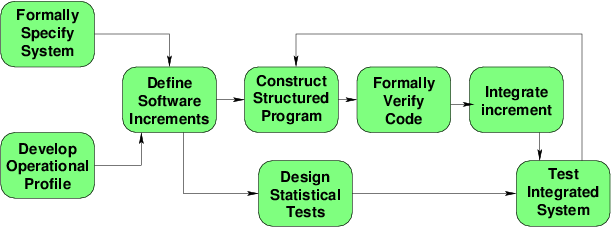

2.4 Cleanroom software development

The name is derived from the ‘Cleanroom’ process in semiconductor fabrication. The philosophy is defect avoidance rather than defect removal.

- Software development process based on:

  - Incremental development

  - Formal specification

  - Static verification using correctness arguments

  - Statistical testing to determine program reliability

- Testing by the developers is actually forbidden!

  - Code generation is suppressed.

  - Compilers are used for syntax/semantic checking only.

Cleanroom process teams

- Specification team.

  - Responsible for developing and maintaining the system specification

- Development team.

  - Responsible for developing and verifying the software. The software is NOT executed during this process

- Certification team.

  - Responsible for developing a set of statistical tests to exercise the software after development.

  - Reliability growth models are used to determine when reliability is acceptable

- Results at IBM have been very impressive, with few discovered faults in delivered systems

- Some independent assessments suggest that the process is no more expensive than other approaches

  - But there have been no controlled studies

  - And their assessment of other approaches is questionable

- Fewer errors than in a ‘traditional’ development process

- Not clear how this approach can be transferred to an environment with less skilled or less highly motivated engineers