Test-Driven Development

Steven J Zeil

Abstract

Test-Driven Development treats unit testing as an integral part of the design and implementation process. Often summarized as “test first, code after”, TDD is actually a recognition that in writing tests, we are

- exploring the API design, and

- anticipating design issues, as well as

- providing for effortless validation of our upcoming implementation.

1 Test-First Development

With our new knowledge of unit-testing frameworks, ideally, we have made it easier to write self-checking unit tests than to write the actual code to be tested.

- We encourage an approach of writing the tests before writing the code.

1.1 Debugging: How Can You Fix What You Can’t See?

The test-first philosophy is easiest to understand in a maintenance/debugging context.

- Before attempting to debug, write a test that reproduces the failure.

  - How else will you know when you’ve fixed it?

- From a practical point of view, debugging generally involves running the buggy input, over and over, while you add debugging output or step through with a debugger.

  - You want that to be as easy as possible.

  - Doing this at the unit level, instead of in the context of the entire system, is often a dramatically better use of your time and effort.
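As a small illustration of "reproduce the failure first", here is a sketch in Python using the standard `unittest` module. Everything in it is hypothetical: `word_count_buggy` stands in for some code with a reported bug (it miscounts when words are separated by two spaces), and `word_count` is the repaired version. The regression test was written against the failing input before any fix was attempted.

```python
import unittest


def word_count_buggy(text):
    """Hypothetical original code: miscounts when spaces are repeated."""
    return len(text.split(" "))


def word_count(text):
    """Hypothetical fix: split() with no argument collapses whitespace runs."""
    return len(text.split())


class WordCountRegressionTest(unittest.TestCase):
    def test_double_space_between_words(self):
        # The input from the (hypothetical) bug report.
        # Run against word_count_buggy, this assertion fails: it returns 3.
        self.assertEqual(word_count("a  b"), 2)

    def test_single_space_still_works(self):
        # Guard against breaking the case that already worked.
        self.assertEqual(word_count("a b"), 2)
```

Running this one small test (for example with `python -m unittest`) is far quicker than re-running the whole system on the buggy input, and the test stays behind afterwards as a regression check.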

1.2 Test-Writing as a Design Activity

Every few years, software designers rediscover the principle of writing tests before implementing code.

Agile and TDD (Test-Driven Development) are just the latest in this long chain.

- Writing tests while “fresh” yields better tests than when they are done as an afterthought.

- Thinking about boundary and special values tests helps clarify the software design

  - Reduces the number of eventual faults actually introduced

- Encourages designing for testability

  - Making sure that the interface provides the “hooks” you need to do testing in the first place.
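To see how boundary and special-value tests can force design decisions, consider this sketch for a hypothetical `clamp` function (the name, signature, and error behavior are assumptions made for the example). Writing the last two tests raises questions the design must answer: what happens when the bounds are equal, and what happens when they are inverted?

```python
import unittest


def clamp(value, low, high):
    """Restrict value to the closed interval [low, high] (hypothetical helper)."""
    if low > high:
        # A design decision prompted by the inverted-range test below.
        raise ValueError("low must not exceed high")
    return max(low, min(value, high))


class ClampBoundaryTest(unittest.TestCase):
    def test_value_inside_range_is_unchanged(self):
        self.assertEqual(clamp(5, 0, 10), 5)

    def test_values_exactly_on_the_bounds(self):
        self.assertEqual(clamp(0, 0, 10), 0)
        self.assertEqual(clamp(10, 0, 10), 10)

    def test_values_just_outside_the_bounds(self):
        self.assertEqual(clamp(-1, 0, 10), 0)
        self.assertEqual(clamp(11, 0, 10), 10)

    def test_degenerate_range_with_equal_bounds(self):
        self.assertEqual(clamp(7, 3, 3), 3)

    def test_inverted_range_is_rejected(self):
        with self.assertRaises(ValueError):
            clamp(5, 10, 0)
```

Raising `ValueError` for an inverted range is only one possible choice; the point is that the test makes us choose before the code is written.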

1.2.1 Tests are Examples

“If it’s hard to write a test, it’s a signal that you have a design problem, not a testing problem. Loosely coupled, highly cohesive code is easy to test.” – Kent Beck

- In writing a test, you are actually writing sample code of how the unit’s interface can be used.

  - Valuable as documentation

- It’s very common when writing tests to discover that the interface is incorrect or inadequate.

  - Interface may not have parameters to supply needed data, or may have the wrong parameters.

  - Functions may be missing that would manipulate the ADT state.

  - Functions may be missing that would allow us to examine the ADT state to see the effects of a manipulation.

- The very act of trying to write black-box tests becomes itself an exercise in validation of the interface design!
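A sketch of how this plays out, using a hypothetical `Inventory` ADT (the class and its method names are invented for illustration). Suppose the first draft of the interface offered only `add`. The moment we try to write a black-box test for `add`, we find there is no way to observe its effect, so the test itself tells us that an observer such as `quantity` is missing.

```python
import unittest


class Inventory:
    """Hypothetical ADT tracking how many of each item are in stock."""

    def __init__(self):
        self._counts = {}

    def add(self, item, quantity):
        """Manipulator: record that `quantity` more of `item` arrived."""
        self._counts[item] = self._counts.get(item, 0) + quantity

    def quantity(self, item):
        """Observer added because the tests could not be written without it."""
        return self._counts.get(item, 0)


class InventoryTest(unittest.TestCase):
    def test_add_accumulates_quantity(self):
        stock = Inventory()
        stock.add("widget", 3)
        stock.add("widget", 2)
        # Without quantity() there would be nothing to assert on here.
        self.assertEqual(stock.quantity("widget"), 5)

    def test_unknown_item_has_zero_quantity(self):
        self.assertEqual(Inventory().quantity("gadget"), 0)
```

The test bodies also double as short usage examples for anyone reading the interface for the first time.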

1.3 The Cycle of Unit Test Failures

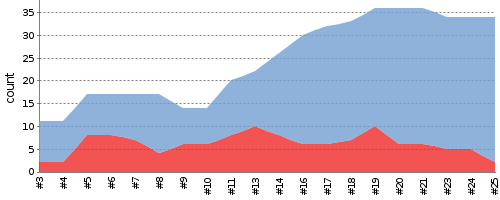

Here you can see a plot of the number of test cases on the vertical axis versus time (actually, commits to the version control system) on the horizontal axis during a project on which I practiced Test-First Development (TFD).

Tests passed are shown in blue and failed tests are shown in red.

Notice the repeated pattern:

- There are multiple sudden rises in the number of tests failed.

  - These are matched by a simultaneous and equal increase in the total number of tests. So what we are seeing is the addition of new tests that we, initially, fail.

  - That’s because in TFD we write tests first for code that we have not yet developed. Naturally, we fail those tests.

- Each such rise is followed by an eventual decrease in the number of failed tests while the total number of tests stays constant (so the blue area grows).

  - That’s because, after developing the new tests, we started working on implementing the new functionality, eventually passing most of those new tests.

- There remains, through most of the project, a base set of red tests that we never quite pass.

  - That’s because I am often adding both unit and integration tests.

  - Unit tests will test our modified ADT(s) in isolation from the rest of the system. They can be passed as soon as we finish the ADT modifications.

  - Integration tests test the modified ADT(s) together with other portions of the system. We can’t pass these until later in the project when those other portions of the system have actually been implemented.

  - You can see, near the end of the project time, that the “stubborn” base of failed tests is finally starting to decrease.

2 TFD during Incremental Development

My typical division of a story into tasks is:

- Create/modify the API to describe a new desired behavior.

- Write the unit tests.

- Implement the new behavior.

- Integrate and commit changes.

Compare this to the steps of TDD, described in Section 3, and you can see that they are compatible.

2.1 Case Study: TFD of a Spreadsheet Story

Here are some short videos illustrating my application of task 1 and task 2 of a story for the Embeddable Spreadsheet project.

3 Test-Driven Development

Test-Driven Development (TDD) is a stronger form of Test-First Development.

In TDD, we repeatedly:

- Write an automated test case for a new desired behavior.

  - This case must, initially, fail.

  - Not compiling counts as “failing”.

- Write just enough new code to pass the test.

- Refactor the code to bring it up to acceptable quality.

This ties in very nicely with some of our previous discussion of incremental development. In particular, compare to the way we break stories into tasks.
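The cycle can be sketched with a small Python example. The function `is_leap_year` and its tests are hypothetical, chosen only because the rules are short. The block shows the state after four trips around the cycle; the comments record what each new test failed against before the code was extended.

```python
import unittest


def is_leap_year(year):
    # State after the final refactor step: the rules that accumulated over
    # four cycles, collapsed into a single expression.
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


class LeapYearTest(unittest.TestCase):
    # Each test was written before the code that satisfies it.

    def test_year_divisible_by_4_is_leap(self):
        # Cycle 1: fails at first because is_leap_year does not exist yet.
        # Just enough code to pass: "return True".
        self.assertTrue(is_leap_year(2024))

    def test_ordinary_year_is_not_leap(self):
        # Cycle 2: fails against "return True".
        # Just enough code to pass: "return year % 4 == 0".
        self.assertFalse(is_leap_year(2023))

    def test_century_is_not_leap(self):
        # Cycle 3: fails against "year % 4 == 0", since 1900 is divisible by 4.
        self.assertFalse(is_leap_year(1900))

    def test_year_divisible_by_400_is_leap(self):
        # Cycle 4: fails against the cycle-3 code, which rejects every century.
        self.assertTrue(is_leap_year(2000))
```

At every step the whole suite is re-run, so the refactoring at the end of each cycle is protected by all the tests written so far.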

3.1 The “Three Rules of TDD”

From Robert Martin:

Over the years I have come to describe Test Driven Development in terms of three simple rules. They are:

1. You are not allowed to write any production code unless it is to make a failing unit test pass.

2. You are not allowed to write any more of a unit test than is sufficient to fail; and compilation failures are failures.

3. You are not allowed to write any more production code than is sufficient to pass the one failing unit test.

You must begin by writing a unit test for the functionality that you intend to write. But by rule 2, you can’t write very much of that unit test. As soon as the unit test code fails to compile, or fails an assertion, you must stop and write production code. But by rule 3 you can only write the production code that makes the test compile or pass, and no more.

If you think about this you will realize that you simply cannot write very much code at all without compiling and executing something. Indeed, this is really the point. In everything we do, whether writing tests, writing production code, or refactoring, we keep the system executing at all times. The time between running tests is on the order of seconds, or minutes. Even 10 minutes is too long.

- Less time spent debugging: the code worked just a minute ago.

- Every hour you are adding multiple tests: tests accumulate quickly.

- Less resistance to cleaning up bad/ugly code: you won’t break things (badly). You have the tests!

3.2 Example of TDD

The Bowling Game Kata (from Martin).
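For readers who want a feel for the kata before working through it, here is a Python sketch of where such an exercise might end up. It is an adaptation for illustration, not Martin's own code: the class name `Game`, the methods `roll` and `score`, and the order of the tests are assumptions made here. The scoring follows standard ten-pin rules and assumes a complete, valid game has been rolled.

```python
import unittest


class Game:
    """Scores one complete game of ten-pin bowling (illustrative sketch)."""

    def __init__(self):
        self._rolls = []

    def roll(self, pins):
        """Record the number of pins knocked down by one roll."""
        self._rolls.append(pins)

    def score(self):
        """Total score, assuming all ten frames have been rolled."""
        total = 0
        i = 0  # index of the first roll of the current frame
        for _ in range(10):
            if self._rolls[i] == 10:  # strike: 10 plus the next two rolls
                total += 10 + self._rolls[i + 1] + self._rolls[i + 2]
                i += 1
            elif self._rolls[i] + self._rolls[i + 1] == 10:  # spare: 10 plus next roll
                total += 10 + self._rolls[i + 2]
                i += 2
            else:  # open frame: just the pins knocked down
                total += self._rolls[i] + self._rolls[i + 1]
                i += 2
        return total


class BowlingGameTest(unittest.TestCase):
    def setUp(self):
        self.game = Game()

    def roll_many(self, count, pins):
        for _ in range(count):
            self.game.roll(pins)

    def test_gutter_game(self):
        self.roll_many(20, 0)
        self.assertEqual(self.game.score(), 0)

    def test_all_ones(self):
        self.roll_many(20, 1)
        self.assertEqual(self.game.score(), 20)

    def test_one_spare(self):
        for pins in (5, 5, 3):
            self.game.roll(pins)
        self.roll_many(17, 0)
        self.assertEqual(self.game.score(), 16)

    def test_one_strike(self):
        for pins in (10, 3, 4):
            self.game.roll(pins)
        self.roll_many(16, 0)
        self.assertEqual(self.game.score(), 24)

    def test_perfect_game(self):
        self.roll_many(12, 10)
        self.assertEqual(self.game.score(), 300)
```

In a TDD session these tests would be added one at a time, each failing first, with `score` growing only as far as the newest test demands.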